We've been in the midst of a productivity boom around LLM usage in sedentary work for several years now. We're still figuring out where these improvements come from and what the most appropriate ways to use this technology are. Within these discussions, there are enthusiasts who claim a 10x or even 100x increase (the latter a number divorced from reality: at 100x, a single week would yield roughly two years' worth of your previous output).

Either way, the combination of necessity (the economy seems to depend on these technologies and, whether we like it or not, they'll be part of every possible product) and claims (some unrealistic, others more sober) leaves us in constant FOMO, anxious about missing the next tool that will make us more productive, let us work less, or perhaps replace us in six months.

While the technology seems impressive from an engineering standpoint, the real-world use and benefits of these tools remain ambiguous and nascent. That's how science works: extraordinary claims require extraordinary evidence. With only a few years of collected data, it's impossible to reach a robust conclusion or widespread consensus, especially in an area where the quantities themselves aren't simple to measure. Productivity, well-being, and work don't have universal definitions; they vary across cultures, industries, and contexts.

For this article, I've grouped the definitions used in the literature into three broad families:

- The classic definition: treats productivity as the ratio between output and input (efficiency and effectiveness).

- The loss-based definition: common in occupational health, it measures productivity through what is lost to absenteeism and presenteeism.

- Technical software engineering definitions: ranging from lines of code per hour to multidimensional models like the SPACE framework (Satisfaction and well-being, Performance, Activity, Communication and collaboration, Efficiency and flow).

This plurality of definitions is, in itself, part of the problem: it makes comparing studies difficult and is one of the reasons why consensus takes so long to form.
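To make the difference between the first two families concrete, here is a minimal Python sketch. The function names, the `LossRecord` fields, and the self-reported `presenteeism_rate` are hypothetical choices made for illustration; real instruments in the literature define and weight these quantities in their own ways.

```python
from dataclasses import dataclass


def classic_productivity(output_units: float, input_hours: float) -> float:
    """Classic definition: ratio between output and input."""
    if input_hours <= 0:
        raise ValueError("input_hours must be positive")
    return output_units / input_hours


@dataclass
class LossRecord:
    """Hypothetical weekly record for the loss-based definition."""
    scheduled_hours: float    # hours the person was expected to work
    absent_hours: float       # absenteeism: hours not worked at all
    presenteeism_rate: float  # assumed share (0..1) of capacity lost while present


def lost_hours(record: LossRecord) -> float:
    """Loss-based definition: hours lost to absenteeism plus presenteeism."""
    present_hours = record.scheduled_hours - record.absent_hours
    return record.absent_hours + present_hours * record.presenteeism_rate


if __name__ == "__main__":
    # 12 delivered work items in a 40-hour week.
    print(classic_productivity(12, 40))         # 0.3 items per hour
    # 4 hours absent, a quarter of capacity lost during the remaining 36 hours.
    print(lost_hours(LossRecord(40, 4, 0.25)))  # 13.0 hours lost
```

The two numbers describe the same week in units that cannot be converted into each other without further assumptions, which is the comparability problem described above.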

One way to make sense of the frenzy around productivity is to look at how many years of consolidated science each field has. While LLM usage doesn't have robust evidence behind it yet, white-collar work and software development have been researched for decades. In those fields, productivity and the ways of measuring it are closer to consensus, simply because more research time has accumulated.

Pragmatically, analyzing productivity isn't an easy task. The quantity being measured isn't a physical law that exists in nature. To isolate it, researchers use a wide range of methods, often slightly different from one study to the next, which makes it hard to aggregate evidence and understand real impacts. The most effective approach is to wait until the scientific literature is extensive enough to give a comprehensive view of the problem being studied.

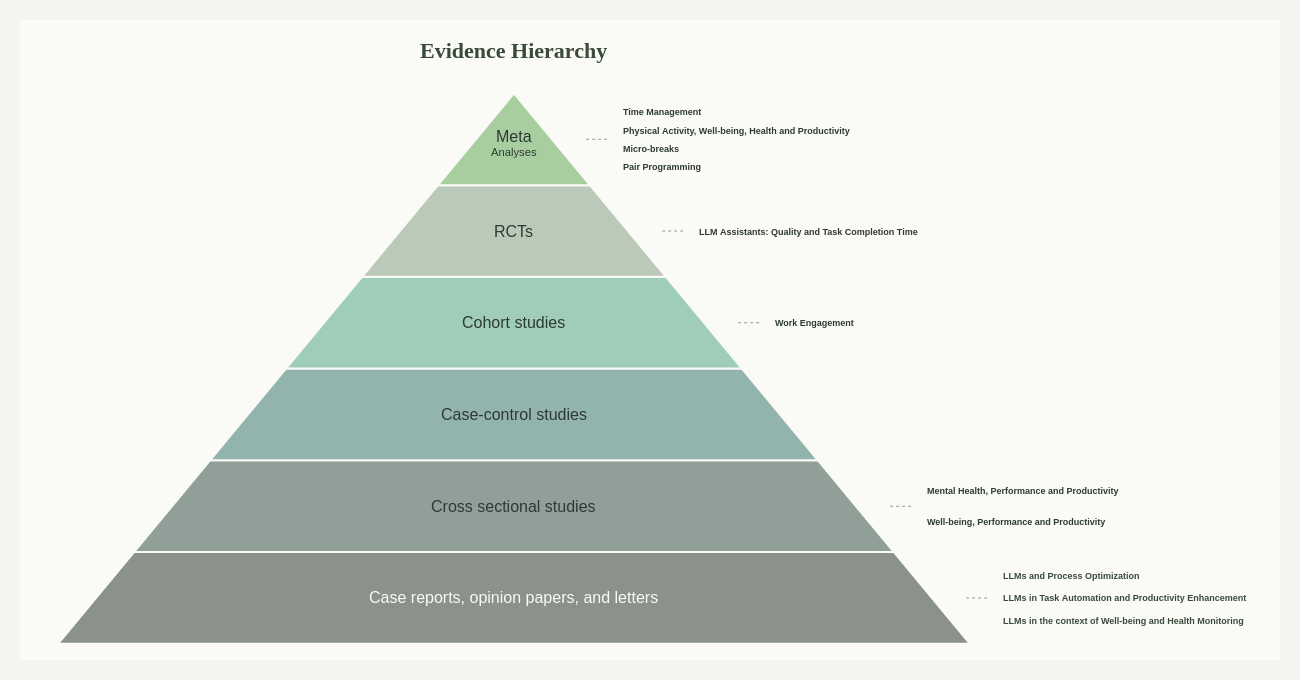

Traversing this pyramid from top to bottom, from areas with the most consolidated evidence to those where research is still forming, we have:

Office Work: The Top of the Pyramid

In the most consolidated research literature on office work, some topics have high-confidence consensus. With over 30 years of research behind them, these topics have widely accepted findings and robust meta-analyses, which occupy the top of the evidence pyramid.

For example, the use of micro-breaks to improve well-being and productivity throughout the day has effects documented with considerable solidity. However, the literature shows that the effect of breaks on productivity depends directly on the type of task: while micro-breaks (up to 10 minutes) improve performance on routine and creative tasks, they don't seem sufficient to recover cognitive capacity in highly mentally demanding tasks, which require longer periods of disconnection. Still, they're a universal "remedy" for increasing vigor and reducing fatigue.

Time management is another widely validated concept, but with a surprising finding: contrary to popular belief that it primarily serves to boost performance, time management is fundamentally an enhancer of well-being. Studies reveal that time management's impact on life satisfaction is significantly greater than its impact on professional performance.

Another counterintuitive finding from this solid evidence base concerns office design. Despite the popularity of open-plan layouts, conceived to promote collaboration, population data shows that working in open offices (with more than 6 people) increases sick-leave absence by up to 62% compared to individual offices, due to higher levels of distraction, cognitive stress, and loss of privacy.

Software Development: Middle Layers

With a bit less research time, productivity in software development occupies the middle layers of the pyramid. Some categories have already reached the systematic review stage, where we have a good sense of the expected conclusions, while others remain at the level of randomized studies. In this area, there are well-established concepts. One is the understanding that human factors (such as ability and expertise) explain performance variations on the order of 10x between individuals, surpassing strictly technical factors. Another is that well-being is more than a predictor of career outcomes and must be understood multidimensionally: it encompasses hedonic factors (being happy and satisfied), eudaimonic factors (sense of competence, autonomy, and impact), and states of flow and intense engagement.

Additionally, the literature has long documented the trade-off that "you can't be faster, better, and cheaper" at the same time. This is evident in meta-analyses of agile practices like pair programming, which produces code with higher correctness (quality) only on highly complex tasks, and at a significantly higher effort cost (paid hours). Similarly, time pressure to accelerate deliveries has a double edge: it increases efficiency in the very short term but severely degrades quality, pushing developers to skip tests and documentation, which results in more bugs later.

The main current bet is trying to show that this historical negative correlation between speed and code quality can begin to reverse with the use of LLM-based assistants, decoupling the speed of code production from its quality.

LLM-Based Tools: The Base of the Pyramid

As for LLM usage, which has accumulated considerably less research time, studies still occupy the lower levels of the pyramid: cohort studies and early attempts at randomized controlled trials to establish whether these tools actually cause productivity improvements.

Due to the field's nascent state, studies of LLMs present mixed results. While some industry use cases report extreme gains, such as reducing effort in development cycles from 75 to just 22 person-days (a 71% gain), other studies point to a decrease in productivity.
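As a quick check on the arithmetic behind that figure, the short Python sketch below recomputes the reduction and converts it into a speedup factor. The helper names are hypothetical, and the only inputs are the 75 and 22 person-days quoted above.

```python
def effort_reduction(before: float, after: float) -> float:
    """Fraction of effort saved when going from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


def speedup_factor(before: float, after: float) -> float:
    """How many times faster the same work gets done."""
    if after <= 0:
        raise ValueError("after must be positive")
    return before / after


if __name__ == "__main__":
    print(f"{effort_reduction(75, 22):.1%}")  # 70.7%
    print(f"{speedup_factor(75, 22):.1f}x")   # 3.4x
```

Even this extreme reported case corresponds to a speedup of about 3.4x, well short of the 10x claims.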

This potential, however, doesn't materialize uniformly. The same literature that documents speed gains also records mechanisms by which the tool can reverse these gains, or even reduce net productivity, depending on the developer's profile and task complexity:

- Role Change (From "Coder" to "Reviewer"): The time gained in code generation is often lost verifying, editing, and debugging the AI-generated code. If the task is too complex, this productivity return diminishes significantly.

- Automation Complacency: Especially among novice developers, the uncritical acceptance of AI-generated code creates a risk of overconfidence and loss of critical thinking, potentially introducing vulnerabilities and quality problems in the code.

- Drop in Team Collaboration: Excessive dependence on LLMs leads developers to consult a chatbot rather than a colleague, which threatens team collaboration and the knowledge-sharing moments inherent to traditional software engineering.

- Flow Disruption: Ironically, although they promise to keep developers focused, interactive assistants (like Copilot) can break the flow state by offering suggestions that are unwanted, incorrect, or delivered too fast to be understood.

For now, the area lacks shared methodology and reference studies, so many of these studies aren't comparable and don't have the rigor necessary to climb to the more robust levels of the evidence pyramid. What they do suggest is that AI's effectiveness will depend largely on how organizations adapt their quality metrics and their teams' cognitive skills.

When we put all this information together, what we get is the following pyramid:

Although individual characteristics are the main factor in how much productivity and performance benefit these methods deliver, the knowledge is mostly applied at the collective level: through tools, well-being and health policies, and employee incentives.

While we have well-established categories at the top of the pyramid, where we know the required investment and expected return for each initiative, companies' investments seem to consistently gravitate toward categories at the lower levels. There, the investment is uncertain (we don't know what's really necessary to obtain results similar to the studies') and the return is uncertain too (results may not be replicable, given the lack of shared methodology and of exhaustive studies on how to improve them).

When analyzing this data and making an evidence-based decision, it's not about choosing between investing in ergonomics or AI tools; in practice, both can coexist. The issue is one of proportionality and sequence. A $1,000 investment in ergonomics, time management training, work methodologies, and physical and mental well-being has a return documented by decades of research, whether in reducing absenteeism or improving performance and productivity. An equivalent investment in LLM-based assistance tools has, at this moment, a return that cannot be quantified beyond anecdotal experience.

In trading an investment with documented returns for one with uncertain returns, companies are not only speculating with their employees' performance but also seeking a productivity that, pragmatically, doesn't exist (at least within science's current understanding). They're chasing an almost magical idea of productivity that would solve all the company's other problems. Yet the slowest part of an office process is rarely the execution. If we return to behavior-based productivity definitions, those that measure motivation, proactivity, and task involvement, it becomes evident that companies' bottleneck is in the stages before execution: planning, alignment between teams, and decision-making. These are dimensions that the SPACE framework classifies under Communication and Collaboration, and which no code generation assistant addresses directly. Investing in something known to improve Activity (more code generated) without solving this bottleneck in Collaboration and organizational Performance doesn't seem to be the most well-founded bet.

References

- Tarro, L., et al. (2020). Effectiveness of Workplace Interventions for Improving Absenteeism, Productivity, and Work Ability of Employees: A Systematic Review and Meta-Analysis of Randomized Controlled Trials. International Journal of Environmental Research and Public Health.

- Albulescu, P., et al. (2022). "Give me a break!" A systematic review and meta-analysis on the efficacy of micro-breaks for increasing well-being and performance. PLoS ONE.

- Knight, C., Patterson, M., & Dawson, J. (2017). Building work engagement: A systematic review and meta-analysis investigating the effectiveness of work engagement interventions. Journal of Organizational Behavior.

- Conn, V. S., et al. (2009). Meta-Analysis of Workplace Physical Activity Interventions. American Journal of Preventive Medicine.

- Aeon, B., Faber, A., & Panaccio, A. (2021). Does time management work? A meta-analysis. PLoS ONE.

- Hannay, J. E., et al. (2009). The effectiveness of pair programming: A meta-analysis. Information and Software Technology.

- Richardson, A., et al. (2017). Office design and health: a systematic review. New Zealand Medical Journal.

- Anakpo, G., Nqwayibana, Z., & Mishi, S. (2023). The Impact of Work-from-Home on Employee Performance and Productivity: A Systematic Review. Sustainability.

- de Oliveira, C., et al. (2022). The Role of Mental Health on Workplace Productivity: A Critical Review of the Literature. Applied Health Economics and Health Policy.

- Wagner, S., & Ruhe, M. (2018). A Systematic Review of Productivity Factors in Software Development. arXiv preprint cs.SE.

- Kuutila, M., et al. (2020). Time Pressure in Software Engineering: A Systematic Review. arXiv preprint cs.SE.

- Godliauskas, P., & Šmite, D. (2025). The well-being of software engineers: a systematic literature review and a theory. Empirical Software Engineering.

- Mohamed, A., Assi, M., & Guizani, M. (2025). The Impact of LLM-Assistants on Software Developer Productivity: A Systematic Literature Review. arXiv preprint cs.SE.

- García-Madurga, M.-Á., et al. (2024). The Role of Artificial Intelligence in Improving Workplace Well-Being: A Systematic Review. Businesses.

- Babashahi, L., et al. (2024). AI in the Workplace: A Systematic Review of Skill Transformation in the Industry. Administrative Sciences.

- Al Naqbi, H., Bahroun, Z., & Ahmed, V. (2024). Enhancing Work Productivity through Generative Artificial Intelligence: A Comprehensive Literature Review. Sustainability.

- Peterman, J. E., et al. (2019). A cluster randomized controlled trial to reduce office workers' sitting time: effect on productivity outcomes. Scandinavian Journal of Work, Environment & Health.

- Egan, T. M., & Song, Z. (2008). Are facilitated mentoring programs beneficial? A randomized experimental field study. Journal of Vocational Behavior.